Dialogue Agent Consistency Evaluation

Evaluating chatbot language models

This work was done as a course project for Stony Brook University’s CSE 538 Natural Language Processing, Fall 2019. The motivation behind this project was to understand where persona-based chatbots can go wrong while interacting with a user. We wanted to measure this quantitatively by running multiple trial episodes.

Abstract:

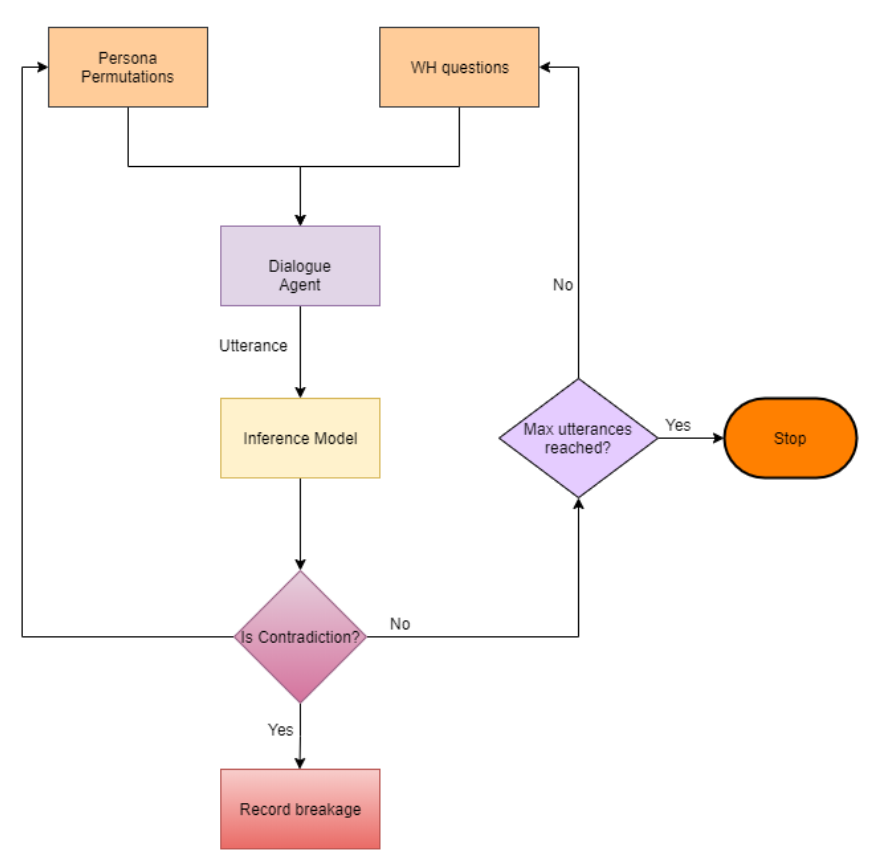

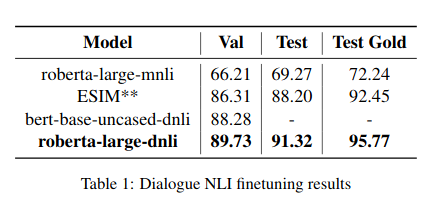

Persona-based neural conversational models are important in the world of chatbots, and testing the consistency of such models is integral to their performance. We present an automated mechanism to detect the breaking point of such models, specifically targeting “TransferTransfo” (Wolf et al., 2019b). Natural language inference is used to calculate a metric: the average number of utterances required to break a persona-based model. An extensive comparison between random-sequenced-inputs and personality-sentences-permuted-inputs has been performed.
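
To make the metric concrete, here is a minimal sketch of how an "average number of utterances to break" score could be computed. It is not the project's actual implementation. The names `utterances_to_break`, `average_breaking_point`, and `toy_contradicts` are hypothetical, and the lookup-based `toy_contradicts` only stands in for a real natural language inference model that would label a (persona sentence, reply) pair as a contradiction. The sketch also assumes that episodes in which the model never contradicts its persona are left out of the average; the original metric may treat them differently.

```python
from statistics import mean
from typing import Callable, List, Optional, Tuple

# (premise, hypothesis) -> True if the hypothesis contradicts the premise.
# In a real setup this would wrap an NLI model; here it is an assumption.
Contradicts = Callable[[str, str], bool]


def utterances_to_break(
    persona: List[str], replies: List[str], contradicts: Contradicts
) -> Optional[int]:
    """Return the 1-based turn at which a reply first contradicts any
    persona sentence, or None if no reply in the episode does."""
    for turn, reply in enumerate(replies, start=1):
        if any(contradicts(fact, reply) for fact in persona):
            return turn
    return None


def average_breaking_point(
    episodes: List[Tuple[List[str], List[str]]], contradicts: Contradicts
) -> Optional[float]:
    """Average the breaking turn over episodes that actually broke.
    Episodes with no contradiction are skipped (an assumption)."""
    turns = [utterances_to_break(p, r, contradicts) for p, r in episodes]
    broken = [t for t in turns if t is not None]
    return mean(broken) if broken else None


# Hypothetical stand-in for an NLI contradiction classifier.
KNOWN_CONTRADICTIONS = {("i have a dog", "i have no pets")}


def toy_contradicts(premise: str, hypothesis: str) -> bool:
    return (premise, hypothesis) in KNOWN_CONTRADICTIONS


persona = ["i have a dog"]
episodes = [
    (persona, ["hello there", "i love walks", "i have no pets"]),  # breaks at turn 3
    (persona, ["i have no pets"]),                                 # breaks at turn 1
    (persona, ["hi", "nice weather"]),                             # never breaks
]
score = average_breaking_point(episodes, toy_contradicts)
```

With these toy episodes, the two broken episodes break at turns 3 and 1, so `score` is their mean. The same function could be run once over episodes driven by random-sequenced inputs and once over episodes driven by permuted personality sentences to compare the two input strategies.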